Microservices vs Monolith: How to Choose Based on Your Team Size

Microservices vs monolith: a practical comparison based on team size, organisational maturity, and the real cost of premature decomposition.

In this article:

- The false dichotomy in the microservices vs monolith debate

- When a monolith is the correct architectural choice

- When microservices make sense

- The cost of premature decomposition

- Monolith decomposition using the strangler fig pattern

- Conclusion

The microservices vs monolith debate has consumed enormous engineering energy over the last decade. Microservices became synonymous with modern architecture, and monoliths became associated with legacy systems that teams were ashamed of. Neither association is accurate. The right architecture depends on team size, organisational structure, system complexity, and the maturity of the delivery process. Choosing microservices before the organisation is ready for them creates distributed complexity that is harder to manage than the monolith it replaced. This article provides a framework for making the choice based on actual constraints rather than architectural fashion.

The False Dichotomy in the Microservices vs Monolith Debate

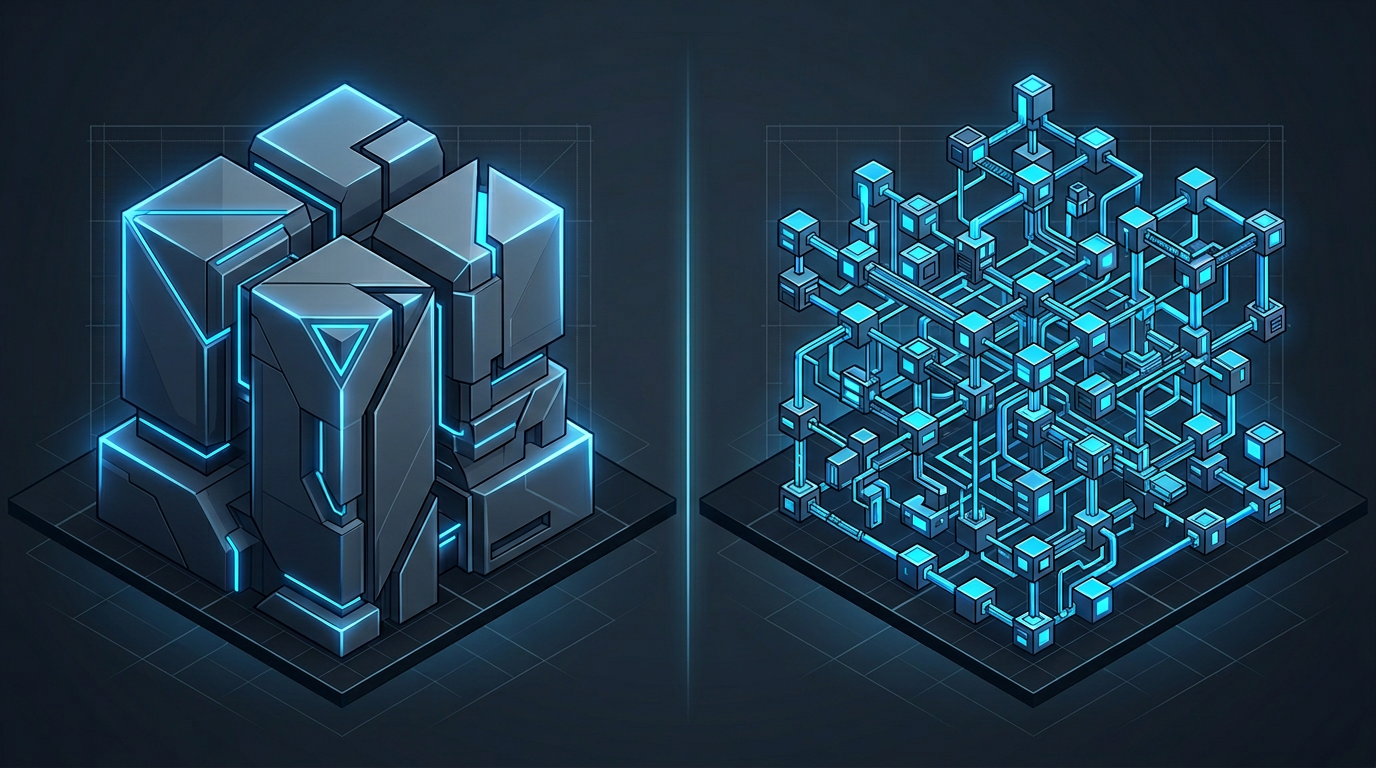

The framing of microservices versus monolith implies two discrete options. In reality, the spectrum of software architecture patterns includes modular monoliths, service-oriented architectures, and various hybrid approaches that do not fit neatly into either category.

A modular monolith is a single deployable unit with well-defined internal module boundaries. It deploys as one process but has the internal structure of a service-oriented system. This architecture is often the correct intermediate step for teams that have outgrown a big ball of mud monolith but are not yet ready for the operational overhead of microservices.
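As a rough illustration, the sketch below shows one way module boundaries might look inside a single process. The module names (`OrderService`, `InMemoryBilling`) and the `BillingApi` contract are hypothetical and invented for this example; the point is only that the orders code depends on a narrow interface rather than on billing internals, while everything still ships as one deployable unit.

```python
from dataclasses import dataclass, field
from typing import Protocol


class BillingApi(Protocol):
    """Public contract of the (hypothetical) billing module."""

    def charge(self, customer_id: str, amount_cents: int) -> str: ...


@dataclass
class InMemoryBilling:
    """Billing implementation; other modules should not touch its state."""

    charges: list = field(default_factory=list)

    def charge(self, customer_id: str, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        self.charges.append((customer_id, amount_cents))
        return f"charge-{len(self.charges)}"


class OrderService:
    """Orders module: depends only on the BillingApi contract."""

    def __init__(self, billing: BillingApi) -> None:
        self._billing = billing

    def place_order(self, customer_id: str, amount_cents: int) -> dict:
        # A plain in-process call: no network hop, no serialisation.
        charge_id = self._billing.charge(customer_id, amount_cents)
        return {"customer": customer_id, "charge_id": charge_id}


def build_app() -> OrderService:
    """Wire the modules together; the result is one deployable unit."""
    return OrderService(billing=InMemoryBilling())
```

If the billing module is later extracted into a service, only the object passed to `OrderService` would need to change, which is what makes this structure a reasonable stepping stone.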

The choice between monolith and microservices is also not permanent. Many successful microservices architectures started as monoliths and were decomposed incrementally as the team grew and the system’s scalability requirements became clearer. Starting with a microservices architecture before the domain boundaries are understood often results in the wrong service boundaries, which are expensive to fix.

Conway’s Law states that organisations build systems that mirror their communication structures. This is an empirical observation with significant implications for architecture choice. A team of five engineers shipping a SaaS product does not have the communication overhead that microservices are designed to manage. They do not need service contracts and API versioning between their own engineers. The architecture should fit the organisation, not the other way around.

When a Monolith Is the Correct Architectural Choice

A monolith is the correct starting point for most new products and for teams of fewer than about twenty engineers. The operational simplicity of a single deployable unit is significant. There is one CI pipeline, one deployment process, one place to look when something breaks, and one codebase to navigate.

Specific conditions that favour a monolith:

Team size under twenty engineers. Below this threshold, the communication overhead that microservices are designed to address does not exist in a meaningful way. Engineers can coordinate directly, shared code is not a bottleneck, and deployment coordination across services adds more friction than it removes.

Unclear domain boundaries. If the product is still evolving rapidly and the domain model is not stable, microservice boundaries defined today will be wrong in six months. A monolith allows boundaries to emerge through the code rather than requiring them to be decided upfront.

Limited infrastructure maturity. Microservices require service discovery, distributed tracing, centralised logging, and orchestration infrastructure. Teams without strong DevOps capability will spend more time managing infrastructure than building product.

Early-stage products. Speed of iteration matters most at the beginning. A well-structured monolith can be refactored. A poorly decomposed microservices architecture requires contract negotiations and coordinated deployments for any change that crosses a service boundary.

When Microservices Make Sense

Microservices are appropriate when specific conditions are met. They are not appropriate simply because the company has reached a certain funding stage or because other companies are using them.

Independent scaling requirements. If one part of the system has significantly different scaling characteristics from the rest, isolating it as a service allows it to scale independently. This is a legitimate technical reason for decomposition.

Independent team ownership. When different teams own different parts of the product and need to deploy independently without coordinating with other teams, service boundaries that align with team boundaries enable autonomous deployment. This is Conway’s Law applied deliberately.

Regulatory or compliance isolation. Some systems require components that handle sensitive data to be deployed, audited, and secured separately from the rest of the system. Service boundaries can enforce this isolation structurally.

Proven domain boundaries. Microservices work best when the domain model is stable and well-understood. If you have been running a monolith for years and the domain boundaries are clear, decomposing along those boundaries is lower risk than defining them from scratch.

The critical question is not whether microservices are better than a monolith in the abstract. It is whether the specific conditions that make microservices worthwhile are present in your organisation now.
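The conditions from the last two sections can be written down as a checklist. The sketch below is one possible encoding, not a rule from any standard: the `TeamContext` fields, the `recommend_architecture` function, and the way the conditions are combined are all assumptions made for illustration. The threshold of twenty engineers is the one used in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamContext:
    """Hypothetical inputs; each field mirrors a condition discussed above."""

    engineers: int
    stable_domain_boundaries: bool
    mature_infrastructure: bool
    needs_independent_scaling: bool
    needs_independent_team_deploys: bool
    needs_compliance_isolation: bool


def recommend_architecture(ctx: TeamContext) -> str:
    """Return a starting recommendation, not a verdict."""
    # Without a concrete driver there is nothing for microservices to solve.
    has_driver = (
        ctx.needs_independent_scaling
        or ctx.needs_independent_team_deploys
        or ctx.needs_compliance_isolation
    )
    if not has_driver:
        return "monolith"

    # A driver exists, but the organisation must also be ready for the overhead.
    ready = (
        ctx.engineers >= 20
        and ctx.stable_domain_boundaries
        and ctx.mature_infrastructure
    )
    return "microservices" if ready else "modular monolith"
```

For example, a team of twelve with stable boundaries and a real scaling need but little infrastructure capability would get "modular monolith" from this sketch. A real decision would weigh these factors rather than apply them as hard gates.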

The Cost of Premature Decomposition

Premature decomposition is one of the most expensive architectural mistakes a team can make. The cost is not just immediate. It compounds over time.

When a system is decomposed before the domain boundaries are understood, the wrong service boundaries are defined. Calls that should be in-process become network calls. Data that should be in one transaction spans multiple services. A well-designed microservices architecture avoids distributed transactions by keeping each transaction inside a single service. With premature boundaries, the problem appears anyway, because operations that belong together have been split across services.
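To make the transaction point concrete, here is a minimal sketch of what a monolith gets for free, using Python's standard `sqlite3` module. The `orders` and `inventory` tables and the `place_order` function are hypothetical examples, not taken from any particular system.

```python
import sqlite3


def place_order(conn: sqlite3.Connection, sku: str, qty: int) -> None:
    """Record an order and reserve stock in one local transaction."""
    with conn:  # commits on success, rolls back if an exception escapes
        conn.execute("INSERT INTO orders (sku, qty) VALUES (?, ?)", (sku, qty))
        cur = conn.execute(
            "UPDATE inventory SET stock = stock - ? WHERE sku = ? AND stock >= ?",
            (qty, sku, qty),
        )
        if cur.rowcount != 1:
            # Raising here also undoes the INSERT above.
            raise ValueError("insufficient stock")


def demo() -> tuple:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, stock INTEGER)")
    conn.execute("CREATE TABLE orders (sku TEXT, qty INTEGER)")
    conn.execute("INSERT INTO inventory VALUES ('widget', 3)")
    conn.commit()

    place_order(conn, "widget", 2)  # succeeds: stock 3 -> 1, one order row
    try:
        place_order(conn, "widget", 5)  # fails: order row is rolled back
    except ValueError:
        pass

    stock = conn.execute("SELECT stock FROM inventory").fetchone()[0]
    orders = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
    return stock, orders  # (1, 1)
```

Once orders and inventory sit in separate services with separate databases, a single local transaction like this is no longer available, and the rollback has to be rebuilt with explicit compensating logic.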

The operational overhead of microservices is also underestimated. Each service needs its own deployment pipeline, its own monitoring, its own scaling configuration, and its own runbook. For a team of ten engineers managing fifteen services, a significant fraction of engineering capacity goes to operational overhead that would not exist in a monolith.

Teams that have decomposed prematurely often find themselves with a distributed monolith: services that are independently deployed but tightly coupled at the data and API level. This architecture has the operational complexity of microservices without the independence benefits. It is harder to change than the original monolith because changes now require coordinated deployments across multiple services.

Addressing this kind of architectural technical debt requires either re-consolidating services or carefully refactoring the coupling, both of which are expensive and disruptive.

Monolith Decomposition Using the Strangler Fig Pattern

For teams that have a working monolith and want to decompose it incrementally, the strangler fig pattern is the most reliable approach. It takes its name from the strangler fig, a plant that grows around a host tree and gradually replaces it.

The pattern works as follows: identify a bounded context within the monolith that has clear inputs and outputs. Extract the functionality behind an interface while keeping the implementation inside the monolith. Gradually move the implementation to a new service while maintaining the interface. Once the implementation is fully external, remove the internal code.
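The steps above can be sketched as a small routing facade. Everything named here (`InvoiceRenderer`, `StranglerFacade`, the `migrated` predicate, the injected `transport`) is a hypothetical illustration under simplifying assumptions; a real migration would also need monitoring and data migration, which this sketch leaves out.

```python
from typing import Callable, Protocol


class InvoiceRenderer(Protocol):
    """The interface extracted from the monolith (hypothetical example)."""

    def render(self, invoice_id: str) -> str: ...


class LegacyInvoiceRenderer:
    """Existing implementation that still lives inside the monolith."""

    def render(self, invoice_id: str) -> str:
        return f"legacy:{invoice_id}"


class RemoteInvoiceRenderer:
    """Adapter for the new service; the transport is injected so the
    sketch runs without a network."""

    def __init__(self, transport: Callable[[str], str]) -> None:
        self._transport = transport

    def render(self, invoice_id: str) -> str:
        return self._transport(invoice_id)


class StranglerFacade:
    """Routes each call to the old or new implementation behind one interface."""

    def __init__(
        self,
        legacy: InvoiceRenderer,
        remote: InvoiceRenderer,
        migrated: Callable[[str], bool],
    ) -> None:
        self._legacy = legacy
        self._remote = remote
        self._migrated = migrated  # decides which requests use the new service

    def render(self, invoice_id: str) -> str:
        if self._migrated(invoice_id):
            try:
                return self._remote.render(invoice_id)
            except ConnectionError:
                # Rollback path: the legacy code is still present mid-migration.
                return self._legacy.render(invoice_id)
        return self._legacy.render(invoice_id)
```

Callers only ever see `render`, so widening the `migrated` predicate moves traffic to the new service step by step, and the legacy class can be deleted once the predicate covers every request.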

This approach has several advantages over big-bang decomposition. The system continues to work throughout the migration. The service boundary is proven by the existing code before it is made permanent. Rollback is possible at any stage. And the team builds microservices experience incrementally rather than committing to a full decomposition before they understand the operational requirements.

The strangler fig pattern requires discipline. The interface must be maintained consistently during the migration, and the team must resist the temptation to let new features be built on both sides of the boundary. A clear migration plan with defined milestones, as part of a broader legacy modernization approach, prevents the migration from stalling halfway through.

Conclusion

The microservices vs monolith decision is a function of team size, organisational structure, domain maturity, and infrastructure capability. For most teams below twenty engineers with an evolving domain, a well-structured monolith or modular monolith is the correct choice. Microservices become appropriate when independent scaling, team autonomy, or compliance requirements justify the operational overhead.

When decomposition is the right move, the strangler fig pattern provides an incremental path that avoids the cost of premature decomposition while allowing the architecture to evolve with the organisation.
