Code Health: How to Assess and Improve Your Codebase Over Time

Learn how to measure code health using concrete metrics and build a continuous improvement process for your codebase.

In this article:

- What Code Health Actually Means

- The Core Code Health Metrics

- How to Run a Codebase Health Assessment

- Building a Continuous Improvement Process

- Conclusion

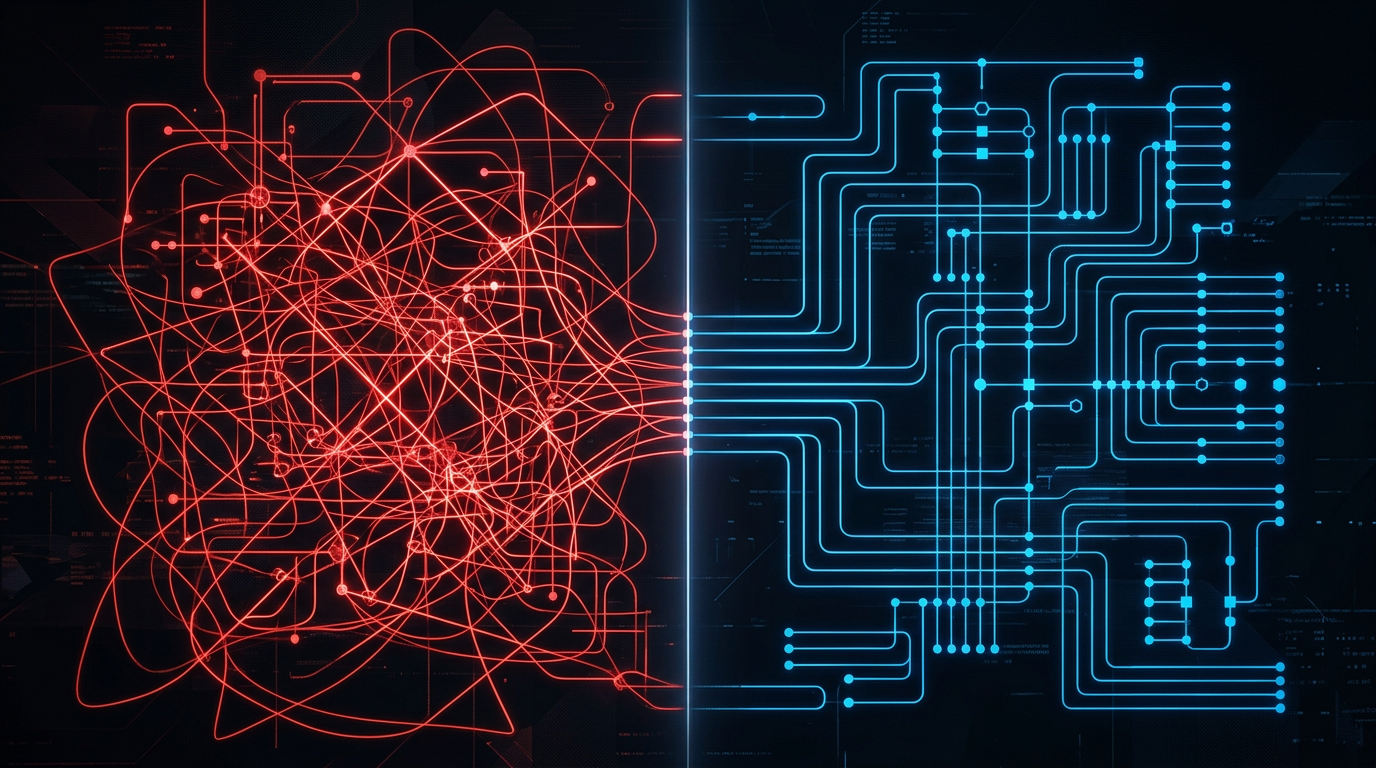

Code health is one of those terms that gets used in engineering conversations without a shared definition. Two engineers on the same team can disagree completely about whether a codebase is “healthy.” One looks at test coverage; the other looks at deployment frequency. Neither is wrong, but without a structured framework, decisions about where to invest engineering time remain subjective and inconsistent.

This guide defines code health using concrete, measurable signals. It explains which metrics matter, how to collect them, and how to build a process that improves your codebase over time rather than letting it degrade sprint by sprint. If your team ships features but accumulates technical debt that no one measures, this is the starting point.

What Code Health Actually Means

Code health refers to the degree to which a codebase supports fast, safe change. A healthy codebase lets engineers add features without fear, fix bugs quickly, and onboard new team members in days rather than weeks. An unhealthy one slows down every operation: PRs take longer, incidents are harder to diagnose, and deployments require coordination rituals that absorb hours.

The key insight is that code health is not about beauty or style. It is about feedback loops. A codebase with 40% test coverage but fast CI pipelines, clear module boundaries, and consistent patterns is often healthier in practice than one with 80% coverage and tangled dependencies everywhere.

Codebase health has three dimensions: structural quality (how the code is organized), operational quality (how the system behaves under change), and team velocity (how fast the team can work safely). Metrics exist for all three. The challenge is selecting the ones that predict real outcomes rather than ones that are easy to measure but misleading.

The Core Code Health Metrics

A useful software health score combines metrics across five categories.

Complexity. Cyclomatic complexity measures the number of linearly independent paths through a function. A function with cyclomatic complexity above 15 is usually a maintenance liability. Track the percentage of functions in your codebase above that threshold. Tools like SonarQube, CodeClimate, or language-specific linters report this automatically.
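To make the threshold concrete, here is a minimal Python sketch that approximates cyclomatic complexity as one plus the number of decision points (branches, loops, exception handlers, extra boolean operands, comprehension clauses) in each function, then reports the share of functions above 15. It is an approximation for illustration, not the exact counting rules of SonarQube, CodeClimate, or any particular linter, and the function names are hypothetical.

```python
import ast

# Node types that each open one extra path through a function.
_BRANCH_NODES = (ast.If, ast.IfExp, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler)


def _count_decisions(node: ast.AST) -> int:
    """Count decision points under `node`, skipping nested functions and classes."""
    count = 0
    for child in ast.iter_child_nodes(node):
        if isinstance(child, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            continue  # nested definitions are scored on their own
        if isinstance(child, _BRANCH_NODES):
            count += 1
        elif isinstance(child, ast.BoolOp):
            count += len(child.values) - 1  # `a and b and c` adds two paths
        elif isinstance(child, ast.comprehension):
            count += 1 + len(child.ifs)  # the loop plus each `if` filter
        count += _count_decisions(child)
    return count


def function_complexities(source: str) -> list[tuple[str, int, int]]:
    """Return (name, line, approximate cyclomatic complexity) for every function."""
    results = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            results.append((node.name, node.lineno, 1 + _count_decisions(node)))
    return results


def percent_above(complexities: list[int], threshold: int = 15) -> float:
    """Percentage of functions whose complexity exceeds `threshold`."""
    if not complexities:
        return 0.0
    over = sum(1 for c in complexities if c > threshold)
    return 100.0 * over / len(complexities)


SAMPLE = '''
def simple(x):
    return x + 1

def branchy(x, items):
    if x > 0 and x < 10:
        return [i for i in items if i]
    elif x < 0:
        for i in items:
            try:
                i.close()
            except OSError:
                pass
    return None
'''

if __name__ == "__main__":
    scores = function_complexities(SAMPLE)
    for name, line, score in scores:
        print(f"{name} (line {line}): {score}")
    print(f"{percent_above([s for _, _, s in scores]):.1f}% of functions above 15")
```

Dedicated tools such as the `mccabe` flake8 plugin or `radon` apply stricter, language-specific rules; the sketch only shows where the number comes from.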

Test coverage and quality. Line coverage is a floor, not a ceiling. A module with 90% line coverage but no assertions on edge cases gives false confidence. More useful signals: mutation score (what percentage of injected bugs your tests catch), test execution time, and the ratio of unit to integration tests. A codebase whose integration tests take 45 minutes to run gives slower feedback than one whose suite finishes in 8 minutes.
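Mutation score is easier to grasp in miniature. The sketch below, written for this article and far simpler than dedicated tools such as PIT, Stryker, or mutmut, flips one comparison or `+`/`-` operator at a time, reruns a test function against each mutant, and reports the fraction of mutants the tests kill. The `discount` function and both test functions are hypothetical.

```python
import ast
import copy

# Operator swaps used to create mutants (each one is a small, plausible bug).
_SWAPS = {
    ast.Lt: ast.LtE, ast.LtE: ast.Lt,
    ast.Gt: ast.GtE, ast.GtE: ast.Gt,
    ast.Add: ast.Sub, ast.Sub: ast.Add,
}


def _is_site(node: ast.AST) -> bool:
    """True if `node` holds an operator this sketch knows how to mutate."""
    if isinstance(node, ast.Compare):
        return len(node.ops) == 1 and type(node.ops[0]) in _SWAPS
    return isinstance(node, ast.BinOp) and type(node.op) in _SWAPS


def generate_mutants(source: str):
    """Yield one mutated copy of `source` per mutation site."""
    tree = ast.parse(source)
    n_sites = sum(1 for node in ast.walk(tree) if _is_site(node))
    for target in range(n_sites):
        mutant = copy.deepcopy(tree)
        node = [n for n in ast.walk(mutant) if _is_site(n)][target]
        if isinstance(node, ast.Compare):
            node.ops[0] = _SWAPS[type(node.ops[0])]()
        else:
            node.op = _SWAPS[type(node.op)]()
        yield ast.unparse(mutant)  # requires Python 3.9+


def _load(src: str, func_name: str):
    namespace: dict = {}
    exec(src, namespace)  # acceptable for a demo; never exec untrusted code
    return namespace[func_name]


def mutation_score(source: str, func_name: str, tests) -> float:
    """Fraction of mutants that make `tests` fail (i.e. are 'killed')."""
    tests(_load(source, func_name))  # the original must pass first
    killed = total = 0
    for mutant_src in generate_mutants(source):
        total += 1
        try:
            tests(_load(mutant_src, func_name))
        except Exception:  # a failed assertion or a crash both count as a kill
            killed += 1
    return killed / total if total else 0.0


SOURCE = '''
def discount(total):
    if total >= 100:
        return total - 10
    return total
'''


def weak_tests(f):
    assert f(50) == 50
    assert f(150) == 140


def strong_tests(f):
    weak_tests(f)
    assert f(100) == 90  # the boundary case the weak suite never checks


if __name__ == "__main__":
    print("weak:", mutation_score(SOURCE, "discount", weak_tests))      # 0.5
    print("strong:", mutation_score(SOURCE, "discount", strong_tests))  # 1.0
```

Both suites give `discount` full line coverage, yet the weak one lets the `>=` to `>` mutant survive, which is exactly the false confidence described above.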

Duplication. Code duplication above 15-20% across a codebase indicates copied logic that tends to diverge over time and eventually cause inconsistency bugs. Token-based duplication detection (built into SonarQube and similar tools) finds structural copies that differ only in variable names.
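A minimal sketch of token-based detection, using Python's standard `tokenize` module: identifiers and literals are replaced with placeholders so renamed copies look identical, every window of `min_tokens` consecutive tokens is indexed, and any window seen more than once marks its lines as duplicated. The 20-token window and all function names are assumptions for illustration, not the settings of SonarQube or any other tool.

```python
import io
import keyword
import tokenize
from collections import defaultdict

# Layout-only tokens that should not affect whether two regions "look the same".
_SKIP = {tokenize.NEWLINE, tokenize.NL, tokenize.COMMENT, tokenize.INDENT,
         tokenize.DEDENT, tokenize.ENCODING, tokenize.ENDMARKER}


def normalized_tokens(source: str) -> list[tuple[str, int]]:
    """Tokenize Python source, replacing identifiers and literals with placeholders."""
    out = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in _SKIP:
            continue
        if tok.type == tokenize.NAME and not keyword.iskeyword(tok.string):
            text = "ID"  # `result` and `acc` become the same token
        elif tok.type == tokenize.NUMBER:
            text = "NUM"
        elif tok.type == tokenize.STRING:
            text = "STR"
        else:
            text = tok.string
        out.append((text, tok.start[0]))  # keep the line number for reporting
    return out


def duplicated_lines(source: str, min_tokens: int = 20) -> set[int]:
    """Line numbers covered by any token window that occurs more than once."""
    toks = normalized_tokens(source)
    windows = defaultdict(list)  # normalized window -> start indices
    for i in range(len(toks) - min_tokens + 1):
        windows[tuple(t for t, _ in toks[i:i + min_tokens])].append(i)
    lines = set()
    for starts in windows.values():
        if len(starts) > 1:
            for i in starts:
                lines.update(range(toks[i][1], toks[i + min_tokens - 1][1] + 1))
    return lines


def duplication_percent(source: str, min_tokens: int = 20) -> float:
    """Share of non-blank lines that fall inside a duplicated window."""
    text_lines = source.splitlines()
    non_blank = {n for n, line in enumerate(text_lines, start=1) if line.strip()}
    if not non_blank:
        return 0.0
    dup = duplicated_lines(source, min_tokens) & non_blank
    return 100.0 * len(dup) / len(non_blank)


SAMPLE = '''
def total_price(items):
    result = 0
    for item in items:
        result += item.price * item.qty
    return result

def total_weight(parcels):
    acc = 0
    for p in parcels:
        acc += p.weight * p.count
    return acc

def greet(name):
    return "hello " + name
'''

if __name__ == "__main__":
    print(sorted(duplicated_lines(SAMPLE)))
    print(f"{duplication_percent(SAMPLE):.1f}% duplicated")
```

Because windows slide across function boundaries, a naive detector like this may also flag a line or two at the edges of a duplicated region; production tools add filtering for that.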

Coupling and cohesion. High coupling between modules means a change in one place breaks things elsewhere. Measure this via instability and abstractness metrics (Martin’s metrics), or simply count the number of cross-module dependencies. A module that imports from 30 other modules is a warning sign regardless of its internal structure.
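Martin's instability metric is I = Ce / (Ca + Ce), where Ce (efferent coupling) counts outgoing dependencies and Ca (afferent coupling) counts incoming ones; distance from the main sequence is D = |A + I - 1|, where A is abstractness. The sketch below applies these formulas at module granularity, counting modules rather than classes, to a hand-written dependency map. That map is an assumption: in practice you would extract it with an import-graph tool for your language. Module and function names are hypothetical.

```python
from collections import defaultdict


def instability(deps: dict[str, set[str]]) -> dict[str, float]:
    """Martin's I = Ce / (Ca + Ce) per module; 0.0 = stable, 1.0 = unstable."""
    afferent: dict[str, int] = defaultdict(int)  # Ca: modules that depend on me
    for src, targets in deps.items():
        for dst in targets - {src}:
            afferent[dst] += 1
    result = {}
    for module in set(deps) | set(afferent):
        ce = len(deps.get(module, set()) - {module})  # Ce: modules I depend on
        ca = afferent[module]
        result[module] = ce / (ca + ce) if ca + ce else 0.0
    return result


def distance_from_main_sequence(abstractness: float, instability_value: float) -> float:
    """D = |A + I - 1|; values near 1.0 sit in the 'zone of pain' or 'zone of uselessness'."""
    return abs(abstractness + instability_value - 1.0)


def high_fan_out(deps: dict[str, set[str]], limit: int = 30) -> list[str]:
    """Modules importing from at least `limit` other modules."""
    return sorted(m for m, targets in deps.items() if len(targets - {m}) >= limit)


DEPS = {
    "billing": {"db", "tax", "notifications"},
    "tax": {"db"},
    "notifications": {"db"},
    "db": set(),
}

if __name__ == "__main__":
    for module, value in sorted(instability(DEPS).items()):
        print(f"{module}: I = {value:.2f}")
    print("fan-out >= 3:", high_fan_out(DEPS, limit=3))
```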

Change failure rate. This is an operational signal: what percentage of deployments cause an incident or require a rollback? Teams with low code health typically see change failure rates above 15%. Teams with mature codebases sit below 5%. This metric comes from your incident tracking system, not your static analysis tools.
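Computing the rate itself is simple once deployments and incidents are linked. This sketch assumes a hypothetical `Deployment` record, exported from your deploy and incident tooling, that flags whether each deployment caused an incident or was rolled back; the field names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    deploy_id: str
    caused_incident: bool = False
    rolled_back: bool = False


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Percentage of deployments that caused an incident or needed a rollback."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d.caused_incident or d.rolled_back)
    return 100.0 * failed / len(deployments)


if __name__ == "__main__":
    history = [Deployment(f"deploy-{i}") for i in range(17)]
    history += [
        Deployment("deploy-17", caused_incident=True),
        Deployment("deploy-18", rolled_back=True),
        Deployment("deploy-19", caused_incident=True, rolled_back=True),
    ]
    print(f"change failure rate: {change_failure_rate(history):.1f}%")  # 15.0%
```

A deployment that both caused an incident and was rolled back counts once, so the rate measures failed deployments rather than failure events.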

How to Run a Codebase Health Assessment

A one-time assessment creates a baseline. Start by running static analysis tools against your entire codebase and capturing the current numbers for each metric above. Do not set targets yet; the goal is an honest picture.

Map the output to your modules or services. A monolith might reveal that 80% of complexity sits in three files that handle billing. A microservices architecture might show that one service accounts for 70% of all incidents. This mapping is where the assessment becomes actionable: you can now talk about specific files, modules, and teams rather than abstract scores.
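The mapping step can be as small as grouping per-file scores by directory. This sketch assumes you already have a total complexity score per file (for example, exported from your static analysis tool) and treats the first path segment as the module; the file names, numbers, and depth are illustrative assumptions.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def complexity_by_module(file_scores: dict[str, int], depth: int = 1) -> dict[str, int]:
    """Sum per-file complexity into modules named by the first `depth` path segments."""
    totals: dict[str, int] = defaultdict(int)
    for path, score in file_scores.items():
        module = "/".join(PurePosixPath(path).parts[:depth])
        totals[module] += score
    return dict(totals)


def top_k_share(file_scores: dict[str, int], k: int = 3) -> float:
    """Percentage of total complexity concentrated in the k most complex files."""
    total = sum(file_scores.values())
    if total == 0:
        return 0.0
    top = sorted(file_scores.values(), reverse=True)[:k]
    return 100.0 * sum(top) / total


# Illustrative numbers only.
FILES = {
    "billing/invoice.py": 120,
    "billing/tax.py": 90,
    "billing/refunds.py": 70,
    "search/query.py": 40,
    "auth/login.py": 30,
}

if __name__ == "__main__":
    print(complexity_by_module(FILES))
    print(f"top 3 files hold {top_k_share(FILES):.0f}% of complexity")
```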

Next, correlate metrics with operational data. Pull your incident log for the past 90 days and identify which files were touched in the commits that preceded each incident. This is the “hotspot” analysis: files that are both complex and frequently changed are your highest-priority technical debt. Files that are complex but rarely touched are lower priority.
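A minimal hotspot ranking multiplies complexity by change count. This sketch assumes the change history comes from `git log --since="90 days ago" --name-only --pretty=format:`, which prints the files touched by each commit, and that per-file complexity and incident counts come from your other tools. Complexity times changes is one common heuristic rather than the only option, and all names and data are hypothetical.

```python
from collections import Counter
from typing import NamedTuple, Optional


class Hotspot(NamedTuple):
    path: str
    complexity: int
    changes: int
    incidents: int
    score: int


def change_frequency(git_log_output: str) -> Counter:
    """Count how many commits touched each file (one path per non-blank line)."""
    return Counter(line.strip() for line in git_log_output.splitlines() if line.strip())


def rank_hotspots(complexity: dict[str, int], changes: Counter,
                  incidents: Optional[dict[str, int]] = None, top: int = 10) -> list[Hotspot]:
    """Rank files by complexity x change count; incident counts break ties."""
    incidents = incidents or {}
    rows = [
        Hotspot(path, cx, changes.get(path, 0), incidents.get(path, 0),
                cx * changes.get(path, 0))
        for path, cx in complexity.items()
    ]
    rows.sort(key=lambda h: (h.score, h.incidents), reverse=True)
    return rows[:top]


LOG = """
billing/invoice.py
billing/tax.py

billing/invoice.py
docs/README.md

billing/invoice.py
legacy/report.py
"""
COMPLEXITY = {"billing/invoice.py": 120, "billing/tax.py": 90, "legacy/report.py": 200}
INCIDENTS = {"billing/invoice.py": 2}  # files touched in commits preceding incidents

if __name__ == "__main__":
    for h in rank_hotspots(COMPLEXITY, change_frequency(LOG), INCIDENTS):
        print(h)
```

Here `legacy/report.py` is the most complex file but ranks below `billing/invoice.py` because it changed only once, which mirrors the point that complex, rarely touched files are lower priority.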

Finally, document the assessment results in a format the whole engineering team can read. A radar chart works well for communicating the overall shape of your codebase health to leadership. A table of hotspots with complexity scores, change frequency, and incident correlation works better for the engineers who will do the work.

Learn more about how Eden Technologies structures these assessments on the tech debt solution page.

Building a Continuous Improvement Process

A single assessment is useful. A continuous process is what actually moves the needle on code health metrics over months.

The core principle is: make health visible in the development workflow, not just in quarterly reviews. This means integrating static analysis into your CI pipeline so every PR sees the current complexity, coverage, and duplication scores for the files it touches. Engineers should never need to go to a dashboard to find out whether a change improved or worsened code health.
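As one way to surface this in review, the sketch below renders a before/after table for the files a PR touches, ready to post as a PR comment. It assumes the changed-file list comes from something like `git diff --name-only origin/main...HEAD` and that your analysis tool can export per-file numbers for the base and head commits; `FileHealth` and `pr_health_table` are hypothetical names, and posting the comment is left to your CI system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileHealth:
    max_complexity: int  # highest function complexity in the file
    coverage: float      # percent of lines covered by tests
    duplication: float   # percent of lines inside duplicated blocks


def _cell(before, after: FileHealth, attr: str, fmt: str) -> str:
    """Format one metric as 'old -> new', or 'new (new)' for files added in the PR."""
    new = format(getattr(after, attr), fmt)
    if before is None:
        return f"{new} (new)"
    return f"{format(getattr(before, attr), fmt)} -> {new}"


def pr_health_table(changed: list[str], base: dict[str, FileHealth],
                    head: dict[str, FileHealth]) -> str:
    """Markdown table of health metrics for each changed file that was analyzed."""
    rows = ["| File | Max complexity | Coverage % | Duplication % |",
            "|---|---|---|---|"]
    for path in sorted(changed):
        after = head.get(path)
        if after is None:
            continue  # deleted file or not analyzed (e.g. docs)
        before = base.get(path)
        cells = [_cell(before, after, "max_complexity", "d"),
                 _cell(before, after, "coverage", ".1f"),
                 _cell(before, after, "duplication", ".1f")]
        rows.append(f"| {path} | " + " | ".join(cells) + " |")
    return "\n".join(rows)


BASE = {"billing/invoice.py": FileHealth(22, 61.0, 8.0)}
HEAD = {"billing/invoice.py": FileHealth(18, 64.5, 8.0),
        "billing/credit.py": FileHealth(4, 90.0, 0.0)}

if __name__ == "__main__":
    print(pr_health_table(["billing/invoice.py", "billing/credit.py", "README.md"], BASE, HEAD))
```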

Define a “boy scout rule” policy: every PR that touches a file must leave that file at least as healthy as it found it. This does not mean every PR needs a massive refactor. It means small, incremental improvements: extracting a function, adding a test, removing a dead code path. Sustained over six months, this approach can reduce average cyclomatic complexity without dedicated refactoring sprints.
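The rule can be enforced as a CI step that compares each touched file against its state on the base branch. This sketch assumes a hypothetical `FileHealth` record exported by your analysis tooling, lists every metric that got worse, and returns a nonzero exit code if any did. New files are skipped here on the assumption that absolute thresholds cover them; the `tolerance` parameter is likewise an assumption to absorb measurement noise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileHealth:
    max_complexity: int
    coverage: float      # percent
    duplication: float   # percent


def regressions(base: dict[str, FileHealth], head: dict[str, FileHealth],
                tolerance: float = 0.0) -> list[str]:
    """Describe every metric that got worse in a touched file."""
    problems = []
    for path in sorted(head):
        before = base.get(path)
        if before is None:
            continue  # new file: nothing to compare against
        after = head[path]
        if after.max_complexity > before.max_complexity:
            problems.append(f"{path}: max complexity {before.max_complexity} -> {after.max_complexity}")
        if after.coverage < before.coverage - tolerance:
            problems.append(f"{path}: coverage {before.coverage:.1f}% -> {after.coverage:.1f}%")
        if after.duplication > before.duplication + tolerance:
            problems.append(f"{path}: duplication {before.duplication:.1f}% -> {after.duplication:.1f}%")
    return problems


def gate(base: dict[str, FileHealth], head: dict[str, FileHealth]) -> int:
    """Print regressions and return a process exit code (0 = pass, 1 = fail)."""
    problems = regressions(base, head)
    for p in problems:
        print("health regression:", p)
    return 1 if problems else 0


if __name__ == "__main__":
    base = {"a.py": FileHealth(12, 70.0, 5.0), "b.py": FileHealth(9, 80.0, 0.0)}
    head = {"a.py": FileHealth(11, 72.0, 5.0), "b.py": FileHealth(9, 78.0, 0.0)}
    exit_code = gate(base, head)  # in CI, pass this to sys.exit()
    print("exit code:", exit_code)
```

In this example `a.py` improved on every metric, but the coverage drop in `b.py` still fails the check, which is the "at least as healthy" rule applied per file.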

Set quarterly health targets at the team level, not the individual level. “Reduce the number of functions with cyclomatic complexity above 15 from 120 to 80 by end of quarter” is a team target. “Increase test coverage from 61% to 70%” is another. These targets feed into sprint planning as a first-class concern alongside feature work, not as an afterthought.
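Progress toward either kind of target can be tracked with one formula, progress = (current - start) / (target - start), which works whether the metric should fall (functions above the complexity threshold) or rise (coverage). The helper below is hypothetical, and the mid-quarter readings in the usage are made up for illustration.

```python
def target_progress(start: float, target: float, current: float) -> float:
    """Percent of the way from `start` to `target`; works for rising or falling goals."""
    if target == start:
        raise ValueError("target must differ from start")
    return 100.0 * (current - start) / (target - start)


if __name__ == "__main__":
    # Functions above complexity 15: goal is 120 -> 80; hypothetical reading of 100.
    print(f"{target_progress(120, 80, 100):.0f}% of the way")  # 50% of the way
    # Coverage: goal is 61% -> 70%; hypothetical reading of 64%.
    print(f"{target_progress(61, 70, 64):.0f}% of the way")    # 33% of the way
```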

Review the hotspot map monthly. As your team ships features, the hotspots shift. A file that was low risk three months ago may now be touched every sprint and accumulate new technical debt. Monthly reviews catch this drift before it becomes a crisis.
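The monthly review can start from a simple diff of two hotspot rankings, such as last month's and this month's output of a hotspot analysis. This sketch, with hypothetical function and file names, reports files that entered or left the top N and how far files in the current top N moved.

```python
def hotspot_drift(previous: list[str], current: list[str], top_n: int = 10):
    """Compare two rankings (hottest first); return (entered, left, moved) for the top N."""
    prev_top, cur_top = previous[:top_n], current[:top_n]
    entered = [p for p in cur_top if p not in prev_top]
    left = [p for p in prev_top if p not in cur_top]
    # Positive values mean the file climbed the ranking since last month.
    moved = {p: previous.index(p) - i for i, p in enumerate(cur_top)
             if p in previous and previous.index(p) != i}
    return entered, left, moved


PREV = ["billing/invoice.py", "legacy/report.py", "auth/login.py", "search/query.py"]
CUR = ["billing/invoice.py", "search/query.py", "legacy/report.py", "auth/login.py"]

if __name__ == "__main__":
    entered, left, moved = hotspot_drift(PREV, CUR, top_n=2)
    print("entered top 2:", entered)
    print("left top 2:", left)
    print("rank changes:", moved)
```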

Conclusion

Code health is not a single number. It is a set of signals that, read together, tell you how much friction your codebase adds to every engineering decision your team makes. Tracking complexity, coverage, duplication, coupling, and change failure rate gives you a concrete picture of where that friction lives.

The goal is not a perfect score. It is a codebase that gets measurably better over time rather than silently worse. Teams that build this process typically see deployment frequency increase by 40-60% within two quarters, and change failure rates drop from double digits to under 5%.
