Canary Deployment: Safe Rollouts for High-Traffic Systems

How canary deployment works, how to configure traffic percentages, what metrics to monitor, and when to use canary vs. blue-green deployment.

In this article:

- What Canary Deployment Is and Why It Works

- Configuring Traffic Percentages and Progression

- What to Monitor During a Canary Rollout

- Canary Deployment and Feature Flags

- Conclusion

Canary deployment is a release strategy that routes a small percentage of production traffic to a new version before rolling it out to all users. The name comes from the practice of taking canary birds into coal mines: if the canary died, miners knew the air was unsafe. In software terms, if the canary deployment fails, the team knows before the failure affects the majority of users.

For high-traffic systems, canary deployment is the standard approach to releasing changes that carry meaningful risk. A bug that affects 5% of users on a canary is recoverable. The same bug deployed to 100% at once is a P1 incident.

What Canary Deployment Is and Why It Works

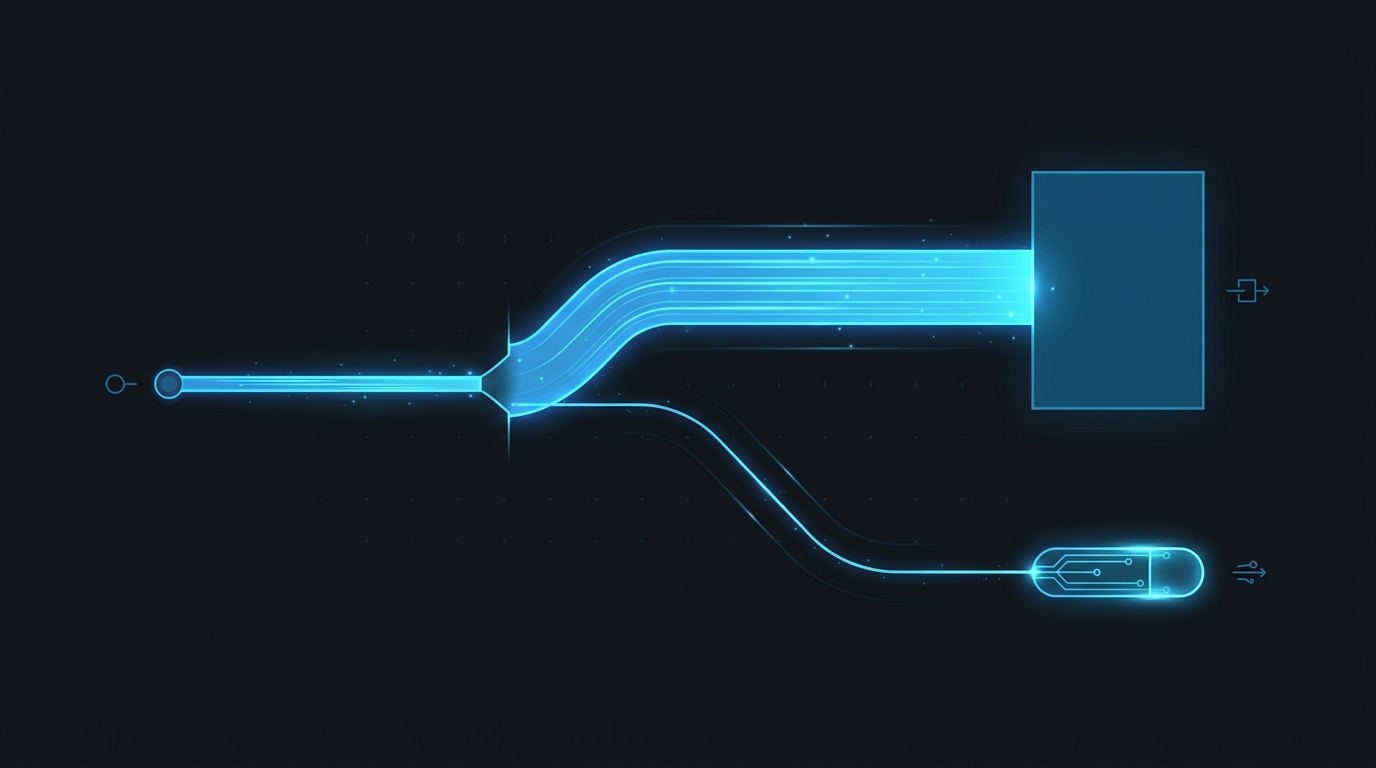

In a canary deployment, traffic is split between the current production version and the new version using weighted routing at the load balancer or service mesh level. A typical progression: 5% to canary for 30 minutes, then 25%, then 50%, then 100% (full rollout). If any step shows degradation in metrics, traffic shifts back to 0% on the canary and the team investigates.
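
The progression above can be modeled as a small state machine. This is a minimal sketch, assuming the load balancer or service mesh lets you set per-version weights. `CanaryRollout` and its methods are hypothetical names, and pushing the weights to a real routing layer is left out.

```python
from dataclasses import dataclass, field

# Hypothetical schedule taken from the example progression: canary weight (%) per step.
DEFAULT_STEPS = [5, 25, 50, 100]


@dataclass
class CanaryRollout:
    steps: list[int] = field(default_factory=lambda: list(DEFAULT_STEPS))
    step_index: int = -1  # -1 means the canary has not received any traffic yet
    aborted: bool = False

    @property
    def canary_weight(self) -> int:
        """Percentage of traffic the load balancer should send to the canary."""
        if self.aborted or self.step_index < 0:
            return 0
        return self.steps[self.step_index]

    @property
    def stable_weight(self) -> int:
        """Percentage of traffic that stays on the current production version."""
        return 100 - self.canary_weight

    def advance(self) -> int:
        """Move to the next step (stays at the last step once it is reached)."""
        if self.aborted:
            raise RuntimeError("rollout was aborted; start a new one after investigating")
        if self.step_index < len(self.steps) - 1:
            self.step_index += 1
        return self.canary_weight

    def abort(self) -> int:
        """Metrics degraded: shift all traffic back to the stable version."""
        self.aborted = True
        return self.canary_weight


rollout = CanaryRollout()
print(rollout.advance())  # 5
print(rollout.advance())  # 25
print(rollout.abort())    # 0: everything back on stable
```

Keeping "aborted" as a terminal state reflects the text: after a rollback the team investigates rather than resuming the same rollout.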

The mechanism works because the canary receives a sample of real production traffic, and production bugs often have non-uniform impact across the user population. A regression that only triggers on specific browser versions, account types, or data patterns will manifest in the canary traffic at roughly its actual rate of occurrence, rather than appearing suddenly across all users when the full rollout completes.

This is the key advantage over blue-green deployment for changes with uncertain behavior: blue-green is atomic, canary is gradual. The team gets to observe real production behavior at limited scale before committing to full rollout.

Change failure rate, one of the DORA (DevOps Research and Assessment) delivery metrics, is directly influenced by canary deployment practices. Teams with automated canary pipelines typically see change failure rates below 5%; teams that deploy directly to 100% of production without canary testing typically see rates above 15%.
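
Change failure rate is a simple ratio: deployments that caused a production failure divided by all deployments in the same window. The sketch below assumes each deployment record carries a boolean outcome. The `Deployment` record and its fields are hypothetical, and what counts as a "failure" is defined by your own incident process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    deploy_id: str
    # True if the change needed remediation in production (rollback, hotfix, patch).
    caused_failure: bool


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of production deployments in a window that led to a failure."""
    if not deployments:
        raise ValueError("no deployments in the measurement window")
    failed = sum(1 for d in deployments if d.caused_failure)
    return failed / len(deployments)


# Hypothetical month: 40 deployments, 2 of which were rolled back.
history = [Deployment(f"deploy-{i}", caused_failure=i in (7, 23)) for i in range(40)]
print(f"{change_failure_rate(history):.1%}")  # 5.0%
```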

Configuring Traffic Percentages and Progression

The traffic progression should be calibrated to the risk level of the change and the volume of traffic the system handles.

For high-traffic systems (millions of requests/day): Even 1% of traffic provides a statistically useful signal within minutes. A typical progression for a high-risk change: 1% for 15 minutes, 5% for 30 minutes, 25% for 30 minutes, then 100%. The three canary stages add up to 75 minutes before the final shift to 100%, with solid confidence established at each step.
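
To check whether a stage will see enough traffic, it helps to convert daily volume into expected canary requests per stage. A rough sketch, assuming a hypothetical volume of 10 million requests/day spread evenly across the day (real traffic has peaks and troughs):

```python
SECONDS_PER_DAY = 86_400

# The high-risk progression from the text: (canary traffic %, minutes at that step).
HIGH_RISK_STAGES = [(1, 15), (5, 30), (25, 30)]


def canary_requests(daily_requests: int, canary_pct: float, minutes: float) -> int:
    """Expected requests reaching the canary during one stage, assuming even traffic."""
    per_second = daily_requests / SECONDS_PER_DAY
    return round(per_second * canary_pct / 100 * minutes * 60)


def describe(daily_requests: int) -> None:
    total_minutes = sum(minutes for _, minutes in HIGH_RISK_STAGES)
    for pct, minutes in HIGH_RISK_STAGES:
        n = canary_requests(daily_requests, pct, minutes)
        print(f"{pct:>3}% for {minutes} min -> ~{n:,} canary requests")
    print(f"time in canary stages: {total_minutes} min")


describe(10_000_000)
```

At 10 million requests/day the 1% step sees roughly 1,042 requests. At 1 million requests/day the same step sees only about 104, so the low end of this traffic band may warrant a longer first step.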

For moderate-traffic systems: Start at 5-10% and observe for longer periods before advancing. With lower traffic volume, you need more time at each percentage to accumulate enough requests to distinguish signal from noise.
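
The standard normal-approximation sample-size formula for comparing two proportions makes the "more time at lower traffic" point concrete. This sketch assumes requests behave as independent trials, a 1% baseline error rate, and a hypothetical moderate-traffic service handling 100,000 requests/day. It computes how many canary requests are needed to detect the error rate doubling, then how long the canary must run to collect them (the stable side accumulates its share much faster).

```python
from math import ceil
from statistics import NormalDist


def samples_per_version(p_stable: float, p_canary: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Requests needed on each version to detect a change in error rate from
    p_stable to p_canary (two-sided two-proportion z-test, normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_stable * (1 - p_stable) + p_canary * (1 - p_canary)
    return ceil((z_alpha + z_power) ** 2 * variance / (p_stable - p_canary) ** 2)


def dwell_hours(samples: int, daily_requests: int, canary_pct: float) -> float:
    """Hours the canary must run to collect `samples` requests, assuming even traffic."""
    canary_per_hour = daily_requests / 24 * canary_pct / 100
    return samples / canary_per_hour


n = samples_per_version(0.01, 0.02)           # detect a 1% -> 2% error-rate jump
print(n)                                      # 2316
print(round(dwell_hours(n, 100_000, 5), 1))   # 11.1
print(round(dwell_hours(n, 100_000, 10), 1))  # 5.6
```

At 5% canary traffic this service needs roughly 11 hours to detect a doubling of a 1% error rate with 80% power; at 10% it needs about 5.6 hours. Larger regressions show up much sooner, since the required sample shrinks roughly with the square of the difference.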

For low-traffic systems (under 100 requests/day): Canary deployment provides limited statistical value at low traffic volumes. Consider blue-green deployment with comprehensive smoke tests instead.

Automated vs. manual progression. Automated canary pipelines advance the percentage automatically if metrics stay within thresholds, and halt if metrics breach thresholds. This requires defining acceptable ranges for error rate, latency, and any business metrics before the deployment starts. Manual progression lets the team review metrics at each step and decide to advance or halt. For critical systems, combining automated halt conditions with manual advance approval provides both safety and oversight.
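
The combined policy (automated halt, manual advance) can be expressed as one decision function evaluated at the end of each step. A minimal sketch; the metric names, threshold values, and the `evaluate_stage` helper are all hypothetical, standing in for whatever your canary tool evaluates.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    HALT = "halt"                      # roll canary traffic back to 0%
    AWAIT_APPROVAL = "await_approval"  # healthy, but a human must approve the next step
    ADVANCE = "advance"                # healthy and allowed to move to the next step


@dataclass(frozen=True)
class Thresholds:
    """Acceptable ranges agreed on before the deployment starts (values are examples)."""
    max_error_rate: float
    max_p99_latency_ms: float
    min_conversion_rate: float


@dataclass(frozen=True)
class StageMetrics:
    error_rate: float
    p99_latency_ms: float
    conversion_rate: float


def evaluate_stage(metrics: StageMetrics, limits: Thresholds,
                   manual_advance: bool, approved: bool = False) -> Decision:
    breached = (
        metrics.error_rate > limits.max_error_rate
        or metrics.p99_latency_ms > limits.max_p99_latency_ms
        or metrics.conversion_rate < limits.min_conversion_rate
    )
    if breached:
        # Automated halt: never waits for a human, even if the step was approved.
        return Decision.HALT
    if manual_advance and not approved:
        return Decision.AWAIT_APPROVAL
    return Decision.ADVANCE


limits = Thresholds(max_error_rate=0.01, max_p99_latency_ms=800, min_conversion_rate=0.028)
healthy = StageMetrics(error_rate=0.004, p99_latency_ms=620, conversion_rate=0.031)
print(evaluate_stage(healthy, limits, manual_advance=True))                 # Decision.AWAIT_APPROVAL
print(evaluate_stage(healthy, limits, manual_advance=True, approved=True))  # Decision.ADVANCE
```

Checking breaches before approval means a human can speed up a healthy rollout but can never override a failing one.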

Infrastructure: AWS CodeDeploy, Argo Rollouts, Spinnaker, and Flagger all support automated canary progressions with configurable metrics integration.

What to Monitor During a Canary Rollout

Monitoring is the operational core of canary deployment. The metrics you monitor determine whether you catch problems early or miss them until full rollout.

Error rate. Compare the error rate of the canary instances to the error rate of the stable instances over the same period. A canary error rate of 2x the baseline or more is a strong signal to halt. Aggregate both versions the same way (same time window, same endpoints); a canary whose errors are concentrated in a few endpoints or request types while its overall rate matches the baseline may have a specific edge-case problem, not a general regression.
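
The 2x rule is a ratio of two rates measured over the same window. A minimal sketch; the helper names and the 0.1% floor (which keeps the ratio finite when the stable version records zero errors) are hypothetical choices.

```python
def error_rate_ratio(canary_errors: int, canary_requests: int,
                     stable_errors: int, stable_requests: int,
                     floor: float = 0.001) -> float:
    """Canary error rate divided by stable error rate over the same window.

    The floor (a hypothetical 0.1%) keeps the ratio finite when the stable
    version happens to record no errors at all.
    """
    canary_rate = canary_errors / canary_requests
    stable_rate = stable_errors / stable_requests
    return canary_rate / max(stable_rate, floor)


def should_halt(ratio: float, max_ratio: float = 2.0) -> bool:
    """Halt when the canary errors at 2x the baseline or more."""
    return ratio >= max_ratio


# 5% canary: 1,000 canary requests vs 19,000 stable requests in the same window.
ratio = error_rate_ratio(30, 1_000, 190, 19_000)
print(f"{ratio:.1f}x baseline, halt={should_halt(ratio)}")  # 3.0x baseline, halt=True
```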

Latency. Compare p50, p95, and p99 latency for the canary and stable instances. A regression that adds 200ms to median response time will typically be visible at 5% traffic within 15-30 minutes on a high-traffic system.
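
Comparing percentiles per version takes only the standard library. This sketch assumes you can pull raw latency samples for each version over the same window; the 100 ms tolerance and the helper names are hypothetical.

```python
from statistics import quantiles


def latency_percentiles(samples_ms: list[float]) -> dict[str, float]:
    """p50/p95/p99 of a latency sample (needs at least two samples)."""
    cuts = quantiles(samples_ms, n=100)  # 99 cut points; cuts[k - 1] is the k-th percentile
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def latency_regressions(canary_ms: list[float], stable_ms: list[float],
                        tolerance_ms: float = 100.0) -> dict[str, float]:
    """Percentiles where the canary is slower than stable by more than tolerance_ms."""
    canary = latency_percentiles(canary_ms)
    stable = latency_percentiles(stable_ms)
    deltas = {p: canary[p] - stable[p] for p in canary}
    return {p: d for p, d in deltas.items() if d > tolerance_ms}


stable = [float(ms) for ms in range(100, 1100)]  # synthetic stable latencies
canary = [ms + 200 for ms in stable]             # canary adds 200 ms to every request
print(sorted(latency_regressions(canary, stable)))  # ['p50', 'p95', 'p99']
```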

Business metrics. For user-facing services, monitor conversion rate, transaction success rate, and session duration for users hitting the canary vs. the stable version. A new checkout page that looks correct technically but reduces conversion by 3% is a regression that error rate and latency metrics will not catch.
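
Business metrics are proportions too, so a two-proportion z-test is one way to judge whether a conversion drop on the canary is real or noise. A sketch with hypothetical session and conversion counts; `conversion_z_test` is an illustrative helper, not a library function.

```python
from math import sqrt
from statistics import NormalDist


def conversion_z_test(canary_conversions: int, canary_sessions: int,
                      stable_conversions: int, stable_sessions: int) -> tuple[float, float]:
    """Two-proportion z-test; returns (z, two-sided p-value).

    Negative z means the canary converts worse than stable.
    """
    p_canary = canary_conversions / canary_sessions
    p_stable = stable_conversions / stable_sessions
    pooled = (canary_conversions + stable_conversions) / (canary_sessions + stable_sessions)
    se = sqrt(pooled * (1 - pooled) * (1 / canary_sessions + 1 / stable_sessions))
    z = (p_canary - p_stable) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical window: stable converts at 3.00%, canary at 2.91% (a 3% relative drop).
z, p = conversion_z_test(2_910, 100_000, 30_000, 1_000_000)
print(f"z={z:.2f}, p={p:.2f}")  # z=-1.59, p=0.11
```

In this example, even 100,000 canary sessions are not enough to distinguish a 3% relative drop from noise at the conventional 0.05 level, so the stage would need to run longer before the regression could be confirmed.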

Resource utilization. CPU, memory, and connection pool utilization on the canary instances. A new version that has a memory leak will show increasing memory utilization in the canary before it causes an OOM on full rollout.

Set up dashboards that display these metrics split by deployment version (canary vs. stable) before you start the rollout. Setting up monitoring after the rollout has started delays detection.

Canary Deployment and Feature Flags

Canary deployment and feature flags are complementary techniques that operate at different levels.

Canary deployment routes a percentage of requests to a different version of the application at the infrastructure level. All users who hit the canary instances see the new behavior. The routing is by request, not by user identity.
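
Per-request routing is why a single user can land on both versions. A minimal sketch of weighted routing as a load balancer might apply it; `route_request` is a hypothetical helper, and real proxies and service meshes do this inside their own routing layer.

```python
import random


def route_request(canary_weight_pct: float, rng: random.Random) -> str:
    """Weighted per-request routing: each request is routed independently of its sender."""
    return "canary" if rng.random() * 100 < canary_weight_pct else "stable"


rng = random.Random(7)  # seeded only so the demo is repeatable
same_user_requests = [route_request(5, rng) for _ in range(1_000)]
print(same_user_requests.count("canary"), "of 1000 requests from one user hit the canary")
```

Because nothing about the user enters the routing decision, the same user's requests are spread across both versions in roughly a 5/95 split.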

Feature flags control behavior within a single version of the application at the code level. A feature flag can be enabled for a specific user, a specific account, a specific percentage of users, or a specific geographic region.

Combined: deploy the new version as a canary (5% of traffic), and within that canary, enable the new feature for 50% of users via a feature flag. This gives you a 2.5% exposure rate. Because the flag is evaluated per user, users outside the flag cohort always see the old behavior. For users inside the cohort to always see the new behavior, rather than randomly switching on each request, the canary routing also needs to be sticky (for example, keyed on a user ID or session cookie) instead of purely per request.
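
A sketch of the combined setup, assuming sticky canary routing keyed on a user ID and hash-based flag bucketing. Hash bucketing is a common technique, but your router and flag service may assign cohorts differently; `bucket`, `sees_new_feature`, and the salt strings are hypothetical.

```python
import hashlib


def bucket(user_id: str, salt: str) -> float:
    """Deterministically map a user to a value in [0, 100) for a given salt."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % 10_000 / 100


def sees_new_feature(user_id: str, canary_pct: float = 5.0, flag_pct: float = 50.0) -> bool:
    # Sticky canary routing keyed on user ID (assumption: the router supports this).
    on_canary = bucket(user_id, "canary-route") < canary_pct
    # Feature flag evaluated per user; "new-checkout" is a hypothetical flag name.
    flag_on = bucket(user_id, "new-checkout") < flag_pct
    return on_canary and flag_on


users = [f"user-{i}" for i in range(100_000)]
exposed = sum(sees_new_feature(u) for u in users)
print(f"{exposed / len(users):.1%} of users exposed")  # close to 2.5%
```

Using different salts for routing and for the flag keeps the 5% and 50% cohorts independent, so about 5% × 50% = 2.5% of users land in both. Because both decisions depend only on the user ID, each user's experience stays the same across requests.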

This combination is the standard approach for testing high-risk user-facing changes in production. It reduces the blast radius to 2-5% of users, maintains behavioral consistency within sessions, and provides clean A/B data for evaluating the impact of the change.

Feature flags also enable instant disable without rollback. If a problem is detected with a specific feature, the flag can be turned off in under 60 seconds without redeploying or changing traffic routing. This is faster than a canary rollback when the problem lies in a specific feature rather than in the infrastructure or the overall application behavior.
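
How quickly a flag disable takes effect depends on how the application picks up flag changes. Flag SDKs commonly either stream updates or poll on an interval. This sketch assumes simple polling with a hypothetical 30-second cache TTL, which keeps a disable under the 60-second mark. `CachedFlagClient` is hypothetical, not a specific vendor's API.

```python
import time
from typing import Callable


class CachedFlagClient:
    """Reads flags from a backing store, caching each value for ttl_seconds.

    A flag turned off in the store takes effect on the first check after the
    cached value expires, with no redeploy and no routing change.
    """

    def __init__(self, fetch: Callable[[str], bool], ttl_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: dict[str, tuple[bool, float]] = {}

    def is_enabled(self, name: str) -> bool:
        now = self._clock()
        cached = self._cache.get(name)
        if cached is not None and now - cached[1] < self._ttl:
            return cached[0]
        value = self._fetch(name)
        self._cache[name] = (value, now)
        return value


store = {"new-checkout": True}  # stands in for the flag service
fake_now = [0.0]                # manually advanced clock for the demo
client = CachedFlagClient(store.__getitem__, ttl_seconds=30.0, clock=lambda: fake_now[0])

print(client.is_enabled("new-checkout"))  # True
store["new-checkout"] = False             # operator disables the flag
fake_now[0] = 10.0
print(client.is_enabled("new-checkout"))  # True (cached value still fresh)
fake_now[0] = 31.0
print(client.is_enabled("new-checkout"))  # False (cache expired, new value fetched)
```

The worst-case propagation delay is the TTL, so the TTL is the knob that trades flag-service load against how fast a kill switch bites.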

Conclusion

Canary deployment reduces change failure rate by exposing new code to production traffic incrementally, with automatic halting conditions and fast rollback. For high-traffic systems, it is the standard approach to releasing changes that carry meaningful risk.

The investment required: weighted routing at the load balancer or service mesh level, monitoring dashboards split by deployment version, and defined thresholds for automatic halt conditions. Teams with this infrastructure in place ship changes in daily canary rollouts that previously required two-week release cycles, with change failure rates below 5% and mean time to recovery under 10 minutes.
