Blue-Green Deployment: How to Deploy Without Fear

How blue-green deployment works, when to use it, how to handle database migrations, and how to combine it with canary releases and feature flags.

In this article:

- How Blue-Green Deployment Works

- Setting Up a Blue-Green Pipeline

- Database Migrations with Blue-Green

- Blue-Green vs. Canary: When to Use Which

- Conclusion

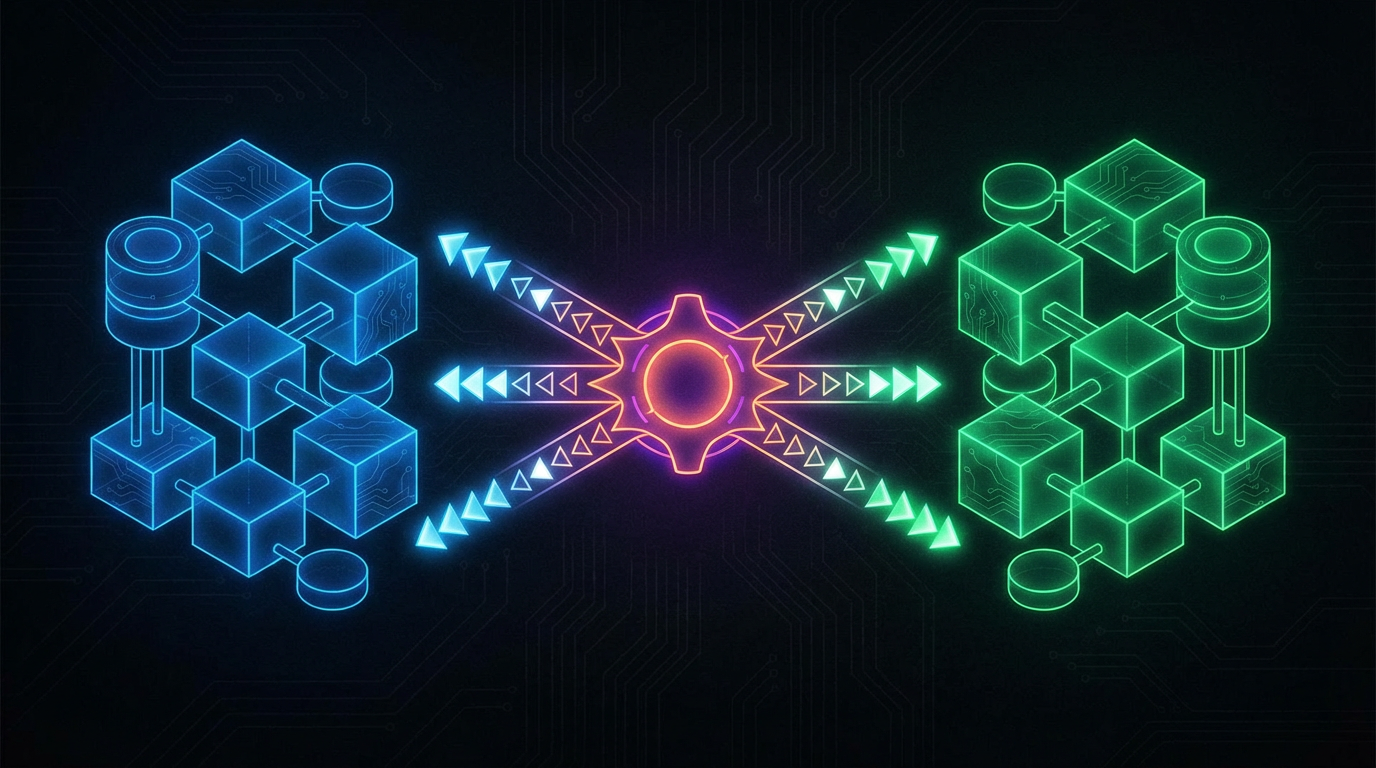

Blue-green deployment is a release strategy that maintains two identical production environments and switches traffic between them. One environment is always live (receiving user traffic); the other is idle (available for the next deployment). When you are ready to release, you deploy to the idle environment, verify it, and then switch the load balancer to route all traffic to the newly deployed environment.

The result: deployments with near-zero downtime, instant rollback capability, and a stable environment for pre-production verification. Teams that implement blue-green deployment consistently report deployment frequency increasing from monthly to weekly or daily, and rollback times dropping from 30-60 minutes to under 2 minutes.

How Blue-Green Deployment Works

The two environments, called blue and green, are identical in configuration, infrastructure, and connectivity to shared services (databases, message queues, external APIs). At any given moment, one serves all production traffic; the other is idle.

The deployment process:

Step 1: Deploy to the idle environment. The new version of the application is deployed to whichever environment is currently idle. No user is affected because the idle environment receives no traffic.

Step 2: Verify the new version. Run automated tests, smoke tests, and health checks against the idle environment. This can include synthetic transaction tests that simulate real user flows. Because the environment is connected to the same database as production (or a snapshot thereof), it tests against realistic data.

Step 3: Switch traffic. Update the load balancer to route 100% of traffic to the newly deployed environment. The switch takes milliseconds. Users experience no downtime; requests in flight at the moment of the switch complete on the old environment (or are retried, depending on load balancer configuration).

Step 4: Monitor. Watch error rates, latency, and business metrics for 10-30 minutes after the switch. If metrics degrade, flip the load balancer back to the previous environment. Rollback is immediate and requires no re-deployment.

Step 5: Decommission the old environment (or leave it idle). Once the new deployment is stable, the old environment becomes the new idle environment, ready for the next deployment.
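The five steps can be sketched as a small in-memory model. This is an illustration under stated assumptions, not a real load balancer integration: `Router`, `release`, and the two callback parameters are hypothetical names introduced here, and "deploying" is reduced to recording a version string.

```python
from dataclasses import dataclass, field


@dataclass
class Router:
    """Hypothetical stand-in for a load balancer that sends all traffic to one environment."""
    live: str = "blue"
    versions: dict = field(default_factory=lambda: {"blue": "v1", "green": "v1"})

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def switch(self) -> None:
        # Used for both the release switch and the rollback: flip live and idle.
        self.live = self.idle


def release(router: Router, version: str, verify, healthy_after_switch) -> str:
    """Run steps 1-5 once and return the name of the environment left live."""
    target = router.idle
    router.versions[target] = version       # Step 1: deploy to the idle environment
    if not verify(target):                  # Step 2: verify before any traffic moves
        return router.live                  # gate failed; live environment untouched
    router.switch()                         # Step 3: route all traffic to the target
    if not healthy_after_switch(target):    # Step 4: monitor after the switch
        router.switch()                     # rollback: flip back, no redeployment
    return router.live                      # Step 5: the other environment is now idle
```

Calling `release(Router(), "v2", verify, healthy_after_switch)` with callbacks that both return `True` leaves green live and blue idle. If the monitoring callback returns `False`, traffic ends up back on blue while green still holds the new version for investigation.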

Setting Up a Blue-Green Pipeline

A minimal blue-green pipeline requires:

Two identical environments. If you are on cloud infrastructure (AWS, GCP, Azure), use infrastructure-as-code (Terraform, Pulumi) to ensure both environments are always in sync. Configuration drift between environments is a common failure cause: a setting that exists in blue but not in green means the verification step does not test what production will actually see.
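One lightweight way to catch drift is to compare the effective settings of both environments before each deployment. The sketch below assumes each environment's configuration can be exported as a flat dictionary; `config_drift` and the setting names in the usage note are hypothetical.

```python
MISSING = "<missing>"  # marker for a setting that exists in only one environment


def config_drift(blue: dict, green: dict) -> dict:
    """Return {setting: (blue_value, green_value)} for every setting that differs."""
    drift = {}
    for key in sorted(blue.keys() | green.keys()):
        blue_value = blue.get(key, MISSING)
        green_value = green.get(key, MISSING)
        if blue_value != green_value:
            drift[key] = (blue_value, green_value)
    return drift
```

For example, comparing `{"POOL_SIZE": "20", "CACHE_TTL": "300"}` with `{"POOL_SIZE": "20"}` reports `CACHE_TTL` as present in blue and missing in green. A non-empty result could be treated as a failed gate.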

A load balancer with routing control. AWS Application Load Balancer, GCP Cloud Load Balancing, Nginx, and HAProxy all support weighted routing that lets you shift traffic between backend pools (target groups, in AWS terms). The switch should be automatable from your CI/CD pipeline.

A deployment pipeline with gate steps. The pipeline deploys to the idle environment, runs verification, and only proceeds to the traffic switch if all gates pass. If any gate fails, the pipeline stops and alerts the team. The live environment is unaffected.
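A gate step is just an ordered list of checks where the first failure stops the pipeline. The following is a minimal sketch, assuming each gate can be expressed as a function returning `True` or `False`; `run_gates` and the gate names in the usage note are hypothetical, and a real CI/CD system would express the same idea in its own configuration format.

```python
from typing import Callable, Iterable, Tuple

Gate = Tuple[str, Callable[[], bool]]


def run_gates(gates: Iterable[Gate]) -> Tuple[bool, str]:
    """Run gates in order and stop at the first failure.

    Returns (True, "") if every gate passes, otherwise (False, name_of_failed_gate).
    A gate that raises an exception is treated as a failure.
    """
    for name, check in gates:
        try:
            passed = bool(check())
        except Exception:
            passed = False
        if not passed:
            return False, name
    return True, ""
```

A pipeline would call something like `run_gates([("health check", check_health), ("smoke tests", run_smoke)])` and proceed to the traffic switch only when the first element of the result is `True`. The name of the failed gate can go into the alert sent to the team.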

Smoke test suite. A small set of end-to-end tests that verify core user flows: can a user log in, can they complete a transaction, does the API respond correctly to key requests. These tests run against the idle environment after deployment and before the traffic switch.
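A smoke suite of this kind can be very small. The sketch below assumes the core flows can be probed with plain HTTP status checks; the paths in `SMOKE_CHECKS` are hypothetical, and `fetch` is an injected function that takes a URL and returns a status code, so that the example does not depend on a particular HTTP client.

```python
from typing import Callable, Dict, List, Tuple

# (check name, path, expected HTTP status); all paths are placeholders
SMOKE_CHECKS: List[Tuple[str, str, int]] = [
    ("health", "/healthz", 200),
    ("login page", "/login", 200),
    ("orders API", "/api/orders", 200),
]


def run_smoke_tests(
    base_url: str,
    fetch: Callable[[str], int],
    checks: List[Tuple[str, str, int]] = SMOKE_CHECKS,
) -> Dict[str, bool]:
    """Probe each path on the idle environment and record pass/fail per check."""
    results = {}
    for name, path, expected_status in checks:
        try:
            results[name] = fetch(base_url + path) == expected_status
        except Exception:
            # A connection error or timeout counts as a failed check.
            results[name] = False
    return results
```

The traffic switch would proceed only if `all(results.values())` is true. In a real pipeline, `fetch` would wrap whatever HTTP client the team already uses and `base_url` would be the idle environment's internal address.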

Database Migrations with Blue-Green

The main constraint on blue-green deployment is shared database state. Both environments point to the same database. A schema change that is compatible with the new application version but breaks the old one means you cannot roll back without also rolling back the schema.

The solution is the expand-contract pattern for database changes.

Never deploy a breaking schema change together with a code change. Schema changes go in their own deployments: additive, backwards-compatible changes precede the code deployment, and destructive changes follow it. The sequence:

- Deploy database migration (backwards-compatible: add column, add table). Both old and new code work with this schema.

- Deploy new application code that uses the new schema state.

- Deploy database migration to remove the old schema state (drop column, drop old index). Run this only once old code is no longer running and you no longer expect to roll back to it.

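This three-step sequence keeps rollback safe at every stage where you still need it, because the schema at each step is compatible with the code at the adjacent steps. Only the final contract step is hard to undo, so run it after the new version has proven stable.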

For teams that do not yet have this process, the first priority is building the habit of separating schema migrations from code deployments. Even without a full blue-green infrastructure, this habit reduces deployment risk significantly. With blue-green infrastructure in place, it becomes the baseline requirement for using it safely.

Blue-Green vs. Canary: When to Use Which

Blue-green deployment switches 100% of traffic instantly. Canary deployment switches a small percentage first. The choice depends on the risk profile of the change.

Use blue-green when:

- The change is well-tested and the team is confident in the new version.

- The change affects all users uniformly, so there is no benefit to testing on a subset first.

- The team wants the simplest possible deployment process with fast rollback.

Use canary deployment when:

- The change has user-facing behavior changes that may interact with specific user segments or data patterns.

- The team wants to validate under real production load before full rollout.

- The change is to a high-traffic, high-stakes component where even a small error rate has significant impact.

The two strategies are complementary. Many teams use blue-green as the default and add canary routing for high-risk releases. Feature flags provide a third layer: behavior changes that need even more granular control can be decoupled from the deployment itself.
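One way to see how the strategies relate is to treat both as a single routing rule with a percentage parameter. The sketch below is an assumption-laden illustration, not how any particular load balancer works: `bucket` and `route` are hypothetical helpers, and it assigns users (rather than individual requests) to a version by hashing their id.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user id to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def route(user_id: str, new_version_percent: int) -> str:
    """Return "new" or "old" for this user at the given rollout percentage."""
    return "new" if bucket(user_id) < new_version_percent else "old"
```

With `new_version_percent` set to 0 or 100 the rule behaves like blue-green: everyone is on one side. A value such as 5 behaves like a canary. Because the bucket is derived from the user id, a user who sees the new version at 5% keeps seeing it as the percentage grows. The same bucketing idea is often used for percentage-based feature flags.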

See the canary deployment guide for implementation details on percentage-based routing.

Conclusion

Blue-green deployment removes the fear from releasing. When rollback takes 90 seconds and the verification environment is identical to production, the deployment becomes a routine operation rather than a high-stress event.

Teams that implement blue-green deployment consistently move from monthly releases to 5-10 deployments per week, with change failure rates below 3%. The infrastructure investment is 1-2 weeks of engineering time. The return is measurable in the first month.
